Fine motor skills for robotic hands

Tying shoelaces, stirring coffee, writing letters, playing the piano: from everyday routines to demanding activities, we use our hands more often than any other body part. Thanks to our highly developed fine motor skills, we can perform grasping movements with variable precision and force distribution. This ability is a fundamental characteristic of the primate hand. Until now, it was unclear how hand movements are planned in the brain. The most recent research project of Stefan Schaffelhofer, Andres Agudelo-Toro and Hansjörg Scherberger from the German Primate Center (DPZ) has shown how the brain of rhesus monkeys controls different grasping movements. Using electrophysiological measurements in the brain areas responsible for planning and executing hand movements, the scientists were able to predict a variety of hand configurations from a precise analysis of neural signals. In initial experiments, the decoded grip types were transferred to a robotic hand. The results of the study will feed into the future development of neuroprostheses intended to help paralyzed patients regain hand function (The Journal of Neuroscience, 2015).

"We wanted to find out how different hand movements are controlled by the brain and whether it was possible to use the activity of nerve cells to predict different grip types", says Stefan Schaffelhofer, neuroscientist in the Neurobiology Laboratory of the DPZ.

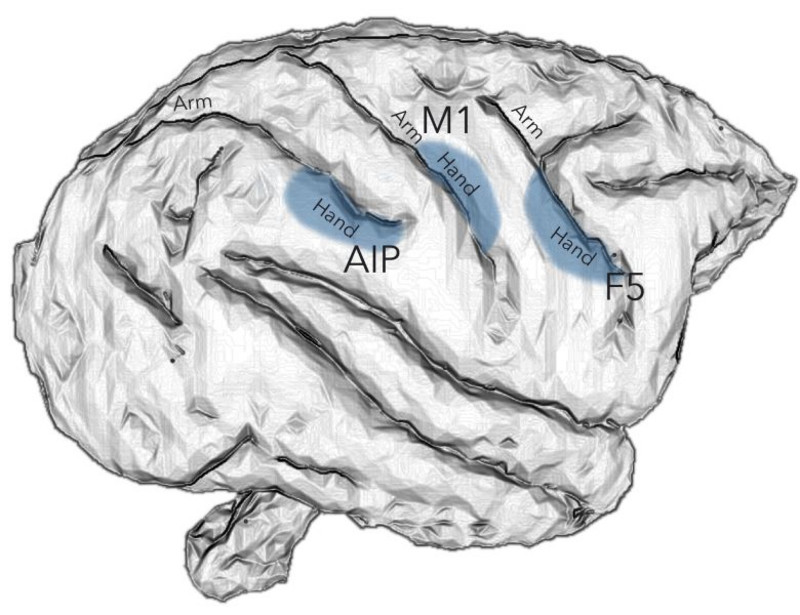

For his PhD thesis, he studied in depth the areas of the cerebral cortex that are responsible for planning and executing hand movements. He found that visual information about graspable objects, especially their three-dimensional shape and size, is processed mainly in the parietal area AIP. The conversion of an object's visual characteristics into the corresponding movement commands is controlled mainly in the premotor area F5 and the primary motor cortex M1.

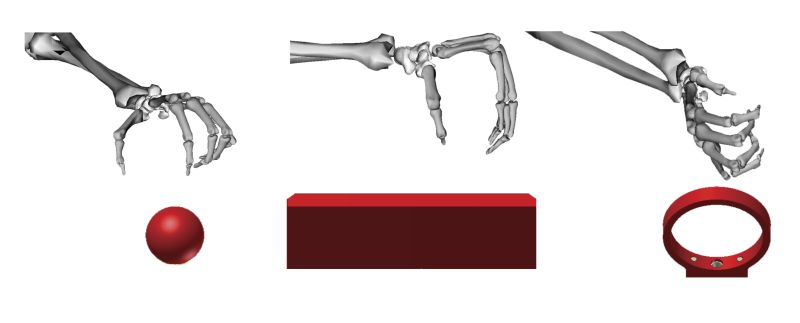

To investigate in detail how these brain regions regulate different grip movements, the researchers recorded the activity of neurons with multi-electrode arrays. They trained rhesus monkeys to repeatedly grasp 50 objects of different shapes and sizes. To identify the grip types and compare them with the neural signals, an electromagnetic data glove recorded all finger and hand movements of the monkeys.

"Before the start of a grasping movement, we illuminated the objects so that the monkeys could see them and recognize their shape," said Stefan Schaffelhofer. "The subsequent grasping movement then took place in the dark after a short delay. This allowed us to separate the neurons' responses to the visual stimuli from the motor signals and to examine the movement planning phase."

Based on the activity of the nerve cells measured during the planning and execution of the grasping movements, the scientists could then infer which grip types had been applied. The predicted grips were compared with the actual hand configurations in the experiment. "The activity of the measured brain cells depends strongly on the grip that was applied. Based on these neural differences, we can calculate the hand movement of the animal," says Stefan Schaffelhofer. "We predicted hand configurations with an accuracy of up to 86 percent in the planning phase and 92 percent in the grasping phase."
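
The idea of predicting a grip from neural activity and then checking it against the grip actually used can be illustrated with a small sketch. It is not the decoder from the study: it assumes a simple nearest-centroid classifier, and the grip names, channel count, firing rates and noise level are all made up for illustration.

```python
from collections import defaultdict
from math import dist
import random


def fit_centroids(rates, grips):
    """Average the firing-rate vectors of all training trials that share a grip label."""
    grouped = defaultdict(list)
    for vector, grip in zip(rates, grips):
        grouped[grip].append(vector)
    return {
        grip: [sum(channel) / len(vectors) for channel in zip(*vectors)]
        for grip, vectors in grouped.items()
    }


def predict_grip(centroids, vector):
    """Return the grip label whose mean firing-rate vector is closest to this trial."""
    return min(centroids, key=lambda grip: dist(centroids[grip], vector))


def accuracy(centroids, rates, grips):
    """Fraction of trials whose predicted grip equals the recorded grip."""
    hits = sum(predict_grip(centroids, v) == g for v, g in zip(rates, grips))
    return hits / len(grips)


def simulate_trials(tuning, n_trials, rng, noise=2.0):
    """Draw noisy firing-rate vectors (spikes/s) around each grip's mean rates."""
    rates, grips = [], []
    for grip, means in tuning.items():
        for _ in range(n_trials):
            rates.append([rng.gauss(m, noise) for m in means])
            grips.append(grip)
    return rates, grips


# Hypothetical mean rates of four recording channels for three invented grip types.
TUNING = {
    "precision": [30.0, 5.0, 12.0, 8.0],
    "power": [6.0, 28.0, 10.0, 20.0],
    "hook": [15.0, 14.0, 32.0, 4.0],
}

rng = random.Random(0)
train_rates, train_grips = simulate_trials(TUNING, 40, rng)
test_rates, test_grips = simulate_trials(TUNING, 20, rng)

# Learn one average activity pattern per grip, then score unseen trials.
centroids = fit_centroids(train_rates, train_grips)
print(f"held-out accuracy: {accuracy(centroids, test_rates, test_grips):.2f}")
```

In this toy setting the simulated grips are far apart relative to the noise, so the classifier separates them easily. Real recordings involve many more channels and grip types, and the accuracies quoted above come from the study's own analysis, not from this sketch.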

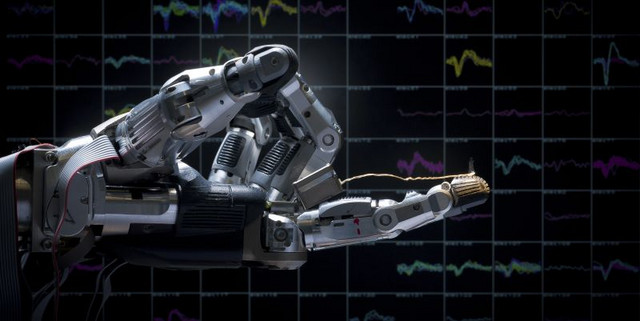

The decoded hand configurations were then successfully transferred to a robotic hand. The scientists have thereby shown that a large number of different hand configurations can be decoded from neuronal planning and execution signals and put to use. This finding will be of great importance, especially for paralyzed patients in whom the connection between the brain and the limbs no longer functions.

"The results of our study are very important for the development of neural-controlled prosthetic hands. They show where and especially how the brain controls grasping movements", Stefan Schaffelhofer summarizes. "Unlike other applications, our method allows a prediction of the grip types in the planning phase of the movement. In future, this can be used to generate neural interface to read, interpret and control the motoric signals.”

Original publication

Schaffelhofer, S., Agudelo-Toro, A. and Scherberger, H. (2015): Decoding a wide range of hand configurations from macaque motor, premotor and parietal cortices. The Journal of Neuroscience, 35(3):1068-1081